The EYESHADE platform integrates state-of-the-art methodologies in a framework based on reliable technologies. The system is implemented as an ad-hoc proprietary framework that coordinates computational modules built on community-maintained software packages.

EYESHADE is a service-oriented, modular middleware written entirely in Java.

The following sections describe the most important components of the system.

EYESHADE monitors multiple online data sources in real time. Documents are downloaded and stored in a local cache for asynchronous processing.

Depending on the kind of document (and data source), different methodologies are applied. This step tunes the analysis to the characteristics of the document by configuring processors and selecting reference models.

The language of each document must be estimated for slang filtering and text normalization as well as for applying the proper localized lexicon and training models.

Micro-blogs and very short texts (SMS, Tweets) must be normalized with respect to slang, shortenings and emoticons. Standard NLP processors can be applied afterwards.

After applying the first set of text processing methods, EYESHADE evaluates the extracted information to optimize the rest of the analysis, both in configuration (e.g. model selection) and in performance.

Temporal expressions written in natural language, such as 'a few minutes ago', are recognized and tagged in the document.

Geo-tagged documents are evaluated on their pure spatial distribution against previously analyzed documents and events in cache.

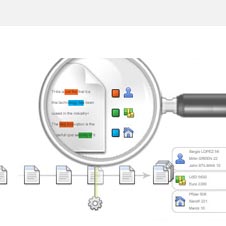

Documents are grammatically analyzed and the main concepts are extracted (labeled). These concepts are used in the correlation analysis as well as for event classification and synthesis.

All knowledge extracted from the document is evaluated with respect to previously analyzed documents and events in cache (candidate and confirmed events both).

Information about each analyzed document is stored in local databases; valuable documents are also saved for analysis traceability.

Depending on the features of the input document, such as its typology, different analyses are applied in terms of processor configuration, training models and metrics.

The short-text class refers to micro-blogging and channels such as Twitter and SMS. The analysis must take into account the characteristics of this kind of communication (e.g. custom slang, shortenings and idioms).

So-called long text is typically gathered from sources such as blogs, Facebook and news aggregators. It is processed with different models than short text.

Some documents might have very little (or no) textual data. Instagram and Flickr photos are one example. However, they can still be a valuable source of information thanks to their metadata, such as the location, the owner and the relations with users and posts.

EYESHADE provides experimental support for Really Simple Syndication (RSS) channels. In this case, each feed is pre-configured with information that is exploited during analysis optimization (e.g. a-priori credibility).
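The typology-driven selection described above can be pictured as a small lookup from data source to document class. The following is a minimal sketch under stated assumptions: the `DocumentTypology` class, the `DocumentClass` enum and the source names used as keys are hypothetical and are not taken from the EYESHADE code base.

```java
import java.util.Locale;

// Hypothetical sketch: maps a data source to one of the document classes
// described in the text. All names here are illustrative assumptions.
class DocumentTypology {

    enum DocumentClass { SHORT_TEXT, LONG_TEXT, MEDIA, RSS }

    // Assumed rule set; a real deployment would load it from configuration.
    static DocumentClass classify(String source, String text) {
        switch (source.toLowerCase(Locale.ROOT)) {
            case "twitter":
            case "sms":
                return DocumentClass.SHORT_TEXT;
            case "instagram":
            case "flickr":
                return DocumentClass.MEDIA;
            case "rss":
                return DocumentClass.RSS;
            default:
                // Unknown source: a document without text only offers metadata.
                return (text == null || text.trim().isEmpty())
                        ? DocumentClass.MEDIA
                        : DocumentClass.LONG_TEXT;
        }
    }

    public static void main(String[] args) {
        System.out.println(classify("Twitter", "fire downtown pls help"));
        System.out.println(classify("facebook", "A longer post about the flood."));
    }
}
```

Each class would then select its own processors, training models and metrics; the fallback on missing text is only one possible policy.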

Document processing roughly follows the order presented below.

The Locale Detector NLP module of EYESHADE uses character n-grams and a naive Bayes filter with various normalizations and feature sampling.

Long-text detection supports 53 languages and is trained on Wikipedia.

Short-text detection supports 47 languages and is trained on micro-blogging datasets.

Detection was tested against news articles from Google (long text) and against very short Tweets, where it scores 91.9% (improving).

The algorithm is optimized for speed, but it also provides the estimated probability of each language.

The recognized language codes are:

af ar bg bn cs da de el en es et fa fi fr gu he hi hr hu id it ja kn ko lt lv mk ml mr ne nl no pa pl pt ro ru sk sl so sq sv sw ta te th tl tr uk ur vi zh-cn zh-tw
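To make the n-gram and naive Bayes idea concrete, here is a toy sketch of a character-trigram detector with add-one smoothing and a uniform prior. It is not the EYESHADE Locale Detector: the class name `NGramLanguageDetector`, its methods and the two training sentences are assumptions made for illustration, and the normalizations and feature sampling mentioned above are left out.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.Set;

// Toy character-trigram naive Bayes language detector (illustrative only).
class NGramLanguageDetector {
    private static final int N = 3;
    private final Map<String, Map<String, Integer>> counts = new HashMap<>();
    private final Map<String, Integer> totals = new HashMap<>();
    private final Set<String> vocabulary = new HashSet<>();

    // Accumulates trigram counts for one language code.
    void train(String lang, String text) {
        Map<String, Integer> langCounts = counts.computeIfAbsent(lang, k -> new HashMap<>());
        for (String gram : ngrams(text)) {
            langCounts.merge(gram, 1, Integer::sum);
            totals.merge(lang, 1, Integer::sum);
            vocabulary.add(gram);
        }
    }

    // Returns P(lang | text) for every trained language, assuming a uniform prior.
    Map<String, Double> probabilities(String text) {
        List<String> grams = ngrams(text);
        Map<String, Double> logScores = new HashMap<>();
        double max = Double.NEGATIVE_INFINITY;
        for (Map.Entry<String, Map<String, Integer>> e : counts.entrySet()) {
            double denom = totals.getOrDefault(e.getKey(), 0) + vocabulary.size() + 1.0;
            double score = 0.0;
            for (String gram : grams) {
                // Add-one smoothing keeps unseen trigrams from zeroing the score.
                score += Math.log((e.getValue().getOrDefault(gram, 0) + 1.0) / denom);
            }
            logScores.put(e.getKey(), score);
            max = Math.max(max, score);
        }
        // Normalize relative to the best score to avoid underflow on long inputs.
        double sum = 0.0;
        for (double s : logScores.values()) sum += Math.exp(s - max);
        Map<String, Double> result = new HashMap<>();
        for (Map.Entry<String, Double> e : logScores.entrySet()) {
            result.put(e.getKey(), Math.exp(e.getValue() - max) / sum);
        }
        return result;
    }

    // Returns the most probable language code, or null if nothing was trained.
    String detect(String text) {
        String best = null;
        double bestP = -1.0;
        for (Map.Entry<String, Double> e : probabilities(text).entrySet()) {
            if (e.getValue() > bestP) { bestP = e.getValue(); best = e.getKey(); }
        }
        return best;
    }

    // Splits lower-cased, space-padded text into overlapping character trigrams.
    private static List<String> ngrams(String text) {
        String s = " " + text.toLowerCase(Locale.ROOT).trim() + " ";
        List<String> grams = new ArrayList<>();
        for (int i = 0; i + N <= s.length(); i++) grams.add(s.substring(i, i + N));
        return grams;
    }

    public static void main(String[] args) {
        NGramLanguageDetector d = new NGramLanguageDetector();
        d.train("en", "the quick brown fox jumps over the lazy dog and the cat is on the table");
        d.train("it", "la volpe veloce salta sopra il cane pigro e il gatto dorme sul tavolo");
        System.out.println(d.detect("the dog is on the table"));
        System.out.println(d.probabilities("il gatto dorme sul tavolo"));
    }
}
```

Returning the full probability map rather than only the best code matches the idea that later stages can use the estimated probability, for example to fall back on a second candidate language.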

New communication channels, such as SMS messages and Tweets, have resulted in a new form of written text (sometimes referred to as "Microtext"), which is very different from well-written text. Typically these messages have limited length and may contain misspellings, slang, or abbreviations.

The EYESHADE Text Normalization NLP module addresses the problems related to the chat-speak style of communication. The language probability map and a-priori information about the data source are exploited to translate and label expressions that might mislead further processing.
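A dictionary lookup is one simple way to picture this normalization step. The sketch below is an assumption-laden illustration, not the EYESHADE module: the `MicrotextNormalizer` class, the per-language lexicon entries and the `[EMOTICON_...]` labels are all hypothetical.

```java
import java.util.Collections;
import java.util.HashMap;
import java.util.Locale;
import java.util.Map;
import java.util.StringJoiner;

// Hypothetical dictionary-based normalizer for chat-speak tokens.
class MicrotextNormalizer {
    // Language code -> (slang token or emoticon -> normalized form or label).
    private final Map<String, Map<String, String>> lexicons = new HashMap<>();

    MicrotextNormalizer() {
        // Illustrative English entries only; real lexicons would be loaded from files.
        Map<String, String> en = new HashMap<>();
        en.put("c", "see");
        en.put("u", "you");
        en.put("pls", "please");
        en.put("2moro", "tomorrow");
        en.put(":)", "[EMOTICON_POSITIVE]");
        en.put(":(", "[EMOTICON_NEGATIVE]");
        lexicons.put("en", en);
    }

    // Replaces known tokens using the lexicon of the detected language;
    // unknown tokens and unknown languages pass through unchanged.
    String normalize(String text, String lang) {
        Map<String, String> lexicon =
                lexicons.getOrDefault(lang, Collections.<String, String>emptyMap());
        StringJoiner out = new StringJoiner(" ");
        for (String token : text.trim().split("\\s+")) {
            out.add(lexicon.getOrDefault(token.toLowerCase(Locale.ROOT), token));
        }
        return out.toString();
    }

    public static void main(String[] args) {
        System.out.println(new MicrotextNormalizer().normalize("c u 2moro :)", "en"));
    }
}
```

Labeling emoticons instead of deleting them keeps the signal available to later sentiment-related stages.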

Classification is typically a computationally expensive task, especially when it targets semantic analysis. This preliminary classification step exploits and extends previously extracted knowledge to add valuable classification data to the document. Only fast, optimized algorithms are considered at this stage; a more complex classification may be applied later in the process.

EYESHADE applies fast string matching and text search algorithms against user- and context-defined keywords. Keyword sets are typically labeled for classification.
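As an illustration of labeled keyword sets, the sketch below tags a text with the labels of every keyword set it hits. It uses a plain hash lookup per token rather than a dedicated multi-pattern matcher, and the `KeywordLabeler` class and its example keywords are assumptions, not part of EYESHADE.

```java
import java.util.HashMap;
import java.util.Locale;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

// Hypothetical keyword labeler: each keyword set carries a classification label.
class KeywordLabeler {
    // Keyword -> labels of the sets that contain it.
    private final Map<String, Set<String>> labelsByKeyword = new HashMap<>();

    void addKeywordSet(String label, String... keywords) {
        for (String keyword : keywords) {
            labelsByKeyword
                    .computeIfAbsent(keyword.toLowerCase(Locale.ROOT), k -> new TreeSet<>())
                    .add(label);
        }
    }

    // Returns the sorted labels of all keyword sets matched by at least one token.
    Set<String> labelsFor(String text) {
        Set<String> labels = new TreeSet<>();
        // Tokenize on anything that is neither a letter nor a digit.
        for (String token : text.toLowerCase(Locale.ROOT).split("[^\\p{L}\\p{Nd}]+")) {
            Set<String> hit = labelsByKeyword.get(token);
            if (hit != null) labels.addAll(hit);
        }
        return labels;
    }

    public static void main(String[] args) {
        KeywordLabeler labeler = new KeywordLabeler();
        labeler.addKeywordSet("fire", "fire", "flames", "smoke");
        labeler.addKeywordSet("flood", "flood", "flooding");
        System.out.println(labeler.labelsFor("Smoke and FLAMES near the river, flooding too!"));
    }
}
```

A token lookup only covers single-word keywords; multi-word phrases would need a different matching strategy.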

EYESHADE processes input documents (e.g. tweets) in work queues. The initial priority of the document is assigned at this stage and may be updated during processing. Spatial and temporal information is also evaluated.
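A prioritized work queue of this kind can be sketched with the standard `java.util.concurrent.PriorityBlockingQueue`. The `DocumentWorkQueue` wrapper, its method names and the numeric priority scale are assumptions for illustration; the text does not describe how EYESHADE implements its queues.

```java
import java.util.Comparator;
import java.util.concurrent.PriorityBlockingQueue;

// Hypothetical work queue: documents with a higher priority are processed first.
class DocumentWorkQueue {

    static final class QueuedDocument {
        final String id;
        final double priority;

        QueuedDocument(String id, double priority) {
            this.id = id;
            this.priority = priority;
        }
    }

    private final PriorityBlockingQueue<QueuedDocument> queue = new PriorityBlockingQueue<>(
            64, Comparator.comparingDouble((QueuedDocument d) -> d.priority).reversed());

    void submit(String id, double priority) {
        queue.add(new QueuedDocument(id, priority));
    }

    // Updates a priority by removing the entry and inserting it again,
    // because a heap does not reorder elements that change in place.
    boolean reprioritize(String id, double newPriority) {
        boolean removed = queue.removeIf(d -> d.id.equals(id));
        if (removed) submit(id, newPriority);
        return removed;
    }

    // Returns the id of the highest-priority document, or null if the queue is empty.
    String next() {
        QueuedDocument d = queue.poll();
        return d == null ? null : d.id;
    }

    public static void main(String[] args) {
        DocumentWorkQueue q = new DocumentWorkQueue();
        q.submit("tweet-1", 0.2);
        q.submit("tweet-2", 0.9);
        q.reprioritize("tweet-1", 1.0);
        System.out.println(q.next());
    }
}
```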

Depending on the data source and its author, the document is tagged with an a-priori credibility weight.

Research has shown that some kinds of slang and punctuation are typically not used in disease- and emergency-related contexts. EYESHADE therefore labels each document with sentiment- and opinion-related metrics.

Metadata of the input document, such as the date and time at which a Tweet is posted, is not necessarily related to the temporal reference of the document contents.

For example: "I've seen a car crash 5 minutes ago."

The Time Tagger NLP module of EYESHADE extracts temporal expressions from the document and annotates them with absolute time values (following the TIMEX3 standard).

The 11 recognized languages are:

English, German, Dutch, Vietnamese, Arabic, Spanish, Italian, French, Chinese, Russian, and Croatian.
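To show what resolving a relative expression against the posting time involves, the sketch below handles only English "N minutes ago" and "N hours ago" and wraps the match in a simplified TIMEX3-style tag. The `RelativeTimeTagger` class, its regular expression and the reduced attribute set (`tid`, `type`, `value`) are assumptions; the actual Time Tagger module covers far more expressions and languages.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;
import java.time.temporal.ChronoUnit;
import java.util.Locale;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical tagger for "N minutes ago" / "N hours ago" expressions.
class RelativeTimeTagger {
    private static final Pattern AGO = Pattern.compile(
            "\\b(\\d{1,4})\\s+(minute|hour)s?\\s+ago", Pattern.CASE_INSENSITIVE);
    private static final DateTimeFormatter VALUE_FORMAT =
            DateTimeFormatter.ofPattern("uuuu-MM-dd'T'HH:mm");

    // Wraps each match in a TIMEX3-style tag whose value is the absolute time,
    // computed by subtracting the stated amount from the posting time.
    static String tag(String text, LocalDateTime postedAt) {
        Matcher m = AGO.matcher(text);
        StringBuffer out = new StringBuffer();
        int id = 1;
        while (m.find()) {
            long amount = Long.parseLong(m.group(1));
            ChronoUnit unit = m.group(2).toLowerCase(Locale.ROOT).equals("hour")
                    ? ChronoUnit.HOURS : ChronoUnit.MINUTES;
            String value = VALUE_FORMAT.format(postedAt.minus(amount, unit));
            String tagged = "<TIMEX3 tid=\"t" + id++ + "\" type=\"TIME\" value=\"" + value
                    + "\">" + m.group() + "</TIMEX3>";
            m.appendReplacement(out, Matcher.quoteReplacement(tagged));
        }
        m.appendTail(out);
        return out.toString();
    }

    public static void main(String[] args) {
        LocalDateTime postedAt = LocalDateTime.of(2014, 5, 1, 10, 30);
        System.out.println(tag("I've seen a car crash 5 minutes ago.", postedAt));
    }
}
```

With a posting time of 10:30, the expression "5 minutes ago" resolves to 10:25, which is the temporal reference of the content rather than of the post itself.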

Documents providing spatial information, either because they contain textual references to places or because they are geo-tagged, are evaluated (in parallel with NLP processing) by a statistical module that takes into account the pure space-time evolution of the stream. The approach assumes that peaks and trend changes in the space-time frequency domain might be directly related to the life cycle of events.

In particular, this information might be exploited for localizing sub-events.
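One simple way to picture peak detection on a space-time stream is to bucket geo-tagged documents into grid cells and compare the latest count of a cell with its recent history. The sketch below uses a basic mean-plus-k-standard-deviations rule, which is a stand-in chosen for illustration and not the statistical method used by EYESHADE; `SpaceTimePeakDetector`, the cell size and the threshold floor are all assumptions.

```java
// Hypothetical grid-based peak detector for geo-tagged document counts.
class SpaceTimePeakDetector {

    // Maps a coordinate to the key of a square grid cell of the given size (in degrees).
    static String cellOf(double lat, double lon, double cellSizeDeg) {
        long row = (long) Math.floor(lat / cellSizeDeg);
        long col = (long) Math.floor(lon / cellSizeDeg);
        return row + ":" + col;
    }

    // Flags a peak when the current count in a cell exceeds the historical mean
    // by more than k standard deviations.
    static boolean isPeak(int[] history, int current, double k) {
        if (history.length == 0) return false;
        double mean = 0.0;
        for (int h : history) mean += h;
        mean /= history.length;
        double variance = 0.0;
        for (int h : history) variance += (h - mean) * (h - mean);
        double std = Math.sqrt(variance / history.length);
        // Assumed floor of 1.0 so that a flat history does not flag tiny fluctuations.
        return current > mean + k * Math.max(std, 1.0);
    }

    public static void main(String[] args) {
        int[] countsPerWindow = {2, 3, 2, 3};
        System.out.println(cellOf(45.07, 7.68, 0.1));
        System.out.println(isPeak(countsPerWindow, 12, 3.0));
    }
}
```

Running the check per cell is what allows a burst to be attributed to a specific area, which is the basis for localizing sub-events.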

Named-entity recognition (NER) is an important and computationally heavy subtask of the information extraction process. NER labels sequences of words in a text that are the names of things, such as persons, organizations and locations, as well as expressions of time and quantities.

EYESHADE comes with many built-in English data models, and corpora for other languages can be added as well.

Each input document is evaluated against recently processed documents and cached events, including draft events (still under estimation) and confirmed (or reliable) emergencies.

The classification and labeled data estimated in previous steps are exploited to compare the topic of the document with current trends, cached events and recently processed documents.

The affinity of the input document with respect to candidate and active events is evaluated and will be exploited later during aggregation.

Documents with spatial information are evaluated in the space-time domain by means of STSS algorithms and clustering methodologies.

Normalized metadata entries of the input document are correlated with recently processed documents and cached events. Fields are grouped into static classes, or features, which are later weighted together.
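Weighting features together can be read as a weighted average of per-feature similarities. The sketch below assumes each feature has already been reduced to a similarity in [0, 1]; the `MetadataCorrelation` class, the feature names and the weights are hypothetical and serve only to illustrate the combination step.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical combination of per-feature similarities into one correlation score.
class MetadataCorrelation {

    // Weighted average over the features present in both maps.
    // Features without a similarity value are skipped instead of counted as zero.
    static double weightedScore(Map<String, Double> similarities, Map<String, Double> weights) {
        double weightedSum = 0.0;
        double weightTotal = 0.0;
        for (Map.Entry<String, Double> w : weights.entrySet()) {
            Double similarity = similarities.get(w.getKey());
            if (similarity == null) continue;
            weightedSum += w.getValue() * similarity;
            weightTotal += w.getValue();
        }
        return weightTotal == 0.0 ? 0.0 : weightedSum / weightTotal;
    }

    public static void main(String[] args) {
        Map<String, Double> weights = new HashMap<>();
        weights.put("author", 1.0);
        weights.put("hashtags", 2.0);
        weights.put("location", 3.0);

        Map<String, Double> similarities = new HashMap<>();
        similarities.put("author", 0.0);
        similarities.put("hashtags", 0.5);
        similarities.put("location", 1.0);

        System.out.println(weightedScore(similarities, weights));
    }
}
```

Skipping missing features keeps documents with sparse metadata comparable; treating them as zero would be an equally valid but stricter policy.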

The EYESHADE platform is relatively compact software (less than 100 MB), but it relies on many data sources and training models, which can take up gigabytes.

EYESHADE uses multiple database backends to store temporary and persistent information.

Many training and lexicon files are stored in the file-system and loaded into memory for performance optimization.

The SQL backend provides persistence support for storing indexes of classified documents and temporary cache. MySQL and PostgreSQL are supported.

The NoSQL backend is used for storing the input document cache, candidate output events and their history.